Mission Assurance Observability Framework

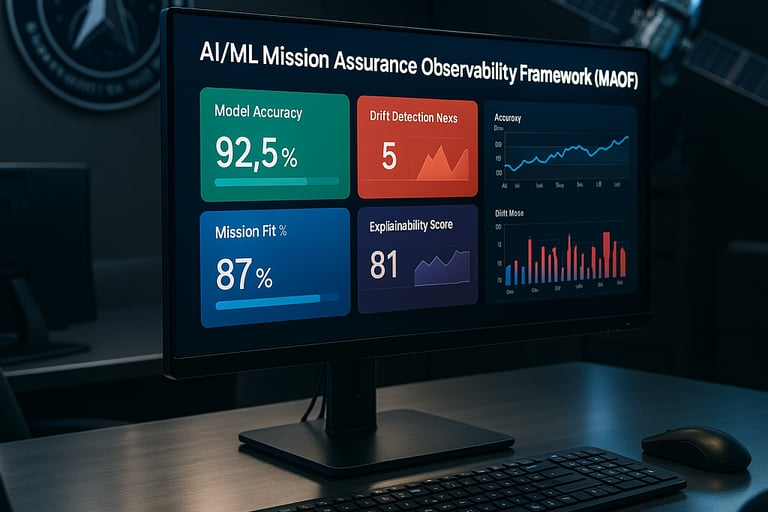

The Mission Assurance Observability Framework (MAOF) is designed to ensure that AI/ML models meet operational requirements and deliver real return on investment (ROI) by continuously measuring their mission relevance, technical performance, and operational effectiveness across five key pillars:

Model Mission Relevance (MMR)

Technical Performance Metrics (TPMs)

Operational Effectiveness Metrics (OEMs)

Explainability and Traceability (E&T)

Feedback Loop and Continuous Learning (FLCL)

This framework uses Space Force Digital Engineering practices to build model observability into DevSecOps pipelines while maintaining compliance with RMF 2.0, Zero Trust, and CDAO policies.

The AI/ML Mission Assurance Observability Framework from Modular Misfits is a practical, operational solution designed to help organizations—especially those in defense and national security—understand whether their AI and machine learning tools are actually working and delivering results.

At its core, the framework gives visibility into how well AI models align with mission objectives, how they perform technically, and how much real-world impact they deliver. It combines proven open-source and commercial tools that monitor model accuracy, data drift, and bias, and it offers built-in dashboards for explainability and for compliance with ethical AI standards.
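To make the idea of data drift monitoring concrete, here is a minimal sketch of one common drift score, the Population Stability Index (PSI), computed between a baseline sample of a feature and a more recent sample. This is an illustration only: the source does not say which drift metric or tooling the framework uses, and the function name `population_stability_index`, the bin count, and the smoothing constant `eps` are assumptions made for this example.

```python
import math
from typing import Sequence


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Return the PSI between a baseline sample and a current sample.

    Hypothetical helper for illustration; not part of any named tool.
    """
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 for a constant baseline

    def bin_fractions(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range are clamped into the edge bins.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor each fraction at eps so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    expected = bin_fractions(baseline)
    actual = bin_fractions(current)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


if __name__ == "__main__":
    training_scores = [float(v) for v in range(100)]
    shifted_scores = [v + 50.0 for v in training_scores]
    print(population_stability_index(training_scores, training_scores))
    print(population_stability_index(training_scores, shifted_scores))
```

A widely quoted rule of thumb treats a PSI below 0.1 as stable and a PSI above 0.25 as a significant shift, but those cutoffs are a convention rather than something the framework specifies, and any alerting threshold would need to be tuned per model and per feature.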

Unlike theoretical approaches or one-off solutions, this framework already integrates with existing DevSecOps environments and can be deployed in classified or air-gapped settings. It goes beyond watching metrics: it is meant to ensure that AI tools are trustworthy, auditable, and improving over time with user feedback. The tools powering it are accessible, vetted, and modular, which makes the framework adaptable to a range of operational environments. This isn't vaporware; it's built for the real world.

The AI/ML Mission Assurance Observability Framework (MAOF) is structured around five key pillars intended to ensure that artificial intelligence and machine learning tools deliver measurable, mission-aligned value. Each pillar evaluates a different aspect of model effectiveness, from technical performance and operational impact to explainability and ongoing improvement. The table below outlines these pillars (Model Mission Relevance, Technical Performance Metrics, Operational Effectiveness Metrics, Explainability and Traceability, and Feedback Loop and Continuous Learning) along with their purpose, example metrics, and how they contribute to the overall assurance of AI/ML systems in high-stakes environments.